AI Data-Sharing Readiness Starts with a Simple Question

On 31 March 2026, the Australian government signed a memorandum of understanding with Anthropic that included something most governance teams have not yet planned for: direct access to the company’s economic-impact data. The agreement commits Anthropic to sharing usage telemetry across sectors, participating in joint safety evaluations through Australia’s AI Safety Institute and collaborating with Australian universities on research. In return, the Australian government gains a structured, ongoing view of how AI is adopted across its economy, what it does to productivity and what it means for workers.

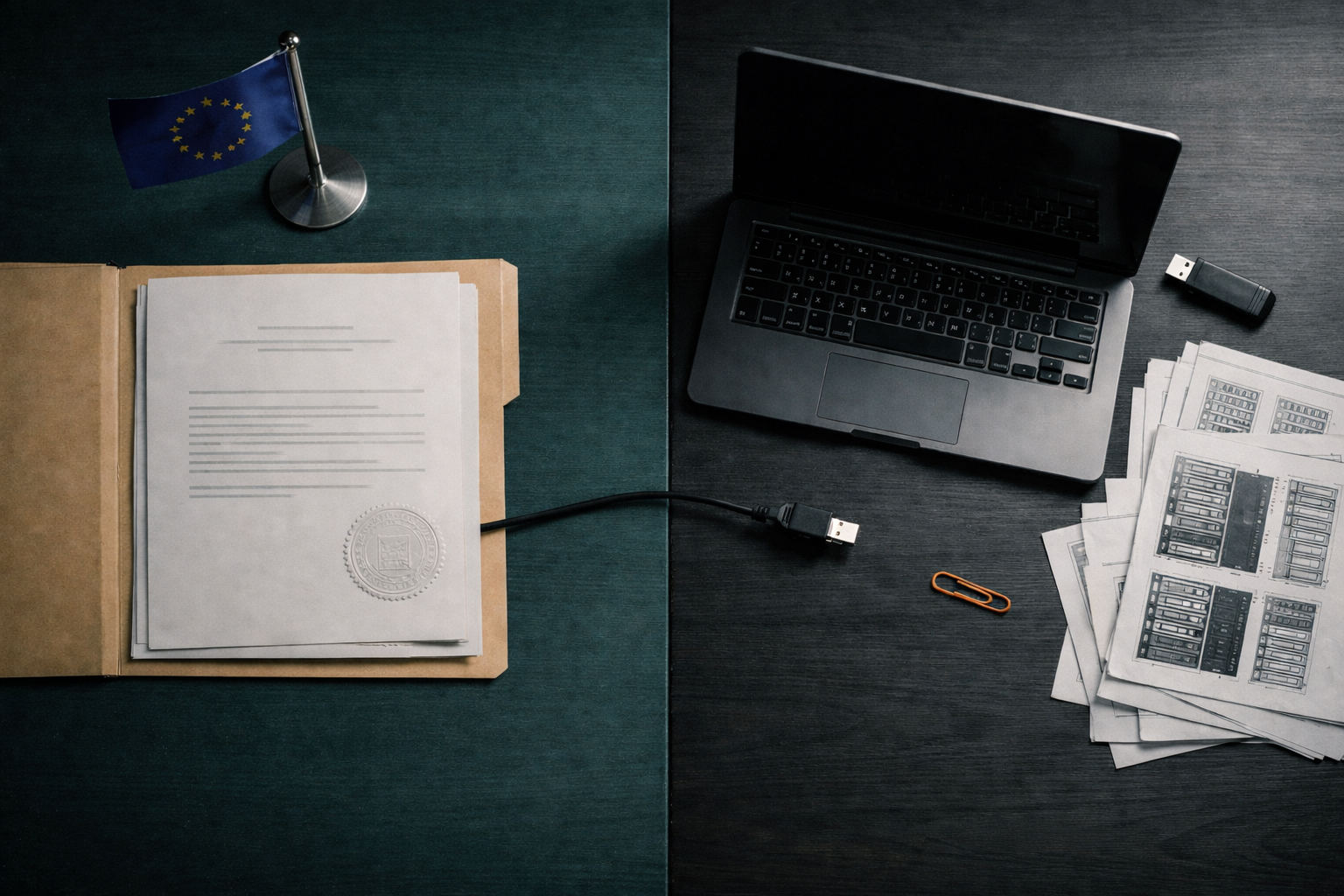

This is not a regulatory penalty. It is not an audit. It is a negotiated data-sharing arrangement between a sovereign government and a frontier AI provider, the first of its kind made public at this level of detail. For governance teams in the EU, it raises a practical question: if your regulator made a similar request tomorrow, could you respond?

What the Australia-Anthropic Deal Actually Contains

The MoU sits under Australia’s National AI Plan, launched in December 2025. It covers three areas. Safety cooperation comes first: Anthropic will share findings on model capabilities and risks with Australia’s AI Safety Institute, mirroring arrangements the company already maintains with safety institutes in the US, UK and Japan. Second, Anthropic’s Economic Index data will be shared with the Australian government, initially focused on natural resources, agriculture, healthcare and financial services. Third, the agreement includes AUD$3 million in API credits for four Australian research institutions working on genomics, paediatric medicine and computing education.

The safety cooperation follows a pattern already visible across several jurisdictions. The data-sharing commitment, however, breaks new ground. Anthropic’s Economic Index classifies AI usage by task, sector and occupation. It also distinguishes between AI used as an assistant and AI operating more autonomously. Sharing that data with a national government gives regulators a structured, sector-level view of how AI tools are actually used in practice. That is a different kind of regulatory relationship from the one most organisations are accustomed to.

AI Data-Sharing Readiness and the EU Regulatory Parallel

The EU AI Act already requires providers of high-risk AI systems to maintain post-market monitoring systems under Article 72. These systems must collect, document and analyse performance data throughout the lifetime of an AI system. In addition, serious incidents must be reported to national authorities under Article 73. The EU AI Office, operational since 2024, holds exclusive supervisory powers over general-purpose AI models. It can request documentation, conduct evaluations and require corrective action from providers.

The Australian MoU goes further than compliance reporting. It establishes a cooperative data pipeline that is ongoing, structured and sector-specific. The EU has not formalised this approach yet, but the direction of travel is consistent. The AI Office is already building evaluation capabilities and developing codes of practice with model providers. The General-Purpose AI Code of Practice, received by the Commission in July 2025, sets out transparency, copyright and safety obligations. Meanwhile, member states are designating national competent authorities and market surveillance bodies.

If a member state or the AI Office decided to negotiate similar bilateral data-sharing arrangements with AI providers operating in Europe, the legal infrastructure to support that request is already in place. The open question is whether deployers’ internal infrastructure can match it.

What Your Governance Team Should Be Asking Now

Suppose your national regulator asked for three things: usage telemetry showing how AI tools are deployed across your organisation, job-impact data measuring how AI affects roles and workflows, and access to your safety-evaluation records. Most organisations cannot produce that information in a structured, auditable format today. AI data-sharing readiness requires internal controls that many governance teams have not yet built. Three areas matter most.

Usage Logging and Classification

Every AI system in use needs a logged record of what it does, who uses it, how frequently and in which business context. This goes beyond an IT inventory. It requires a classification framework that links AI usage to operational functions and risk categories. Without that structure, any external data request becomes a manual scramble rather than a routine extraction.
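As a rough illustration of what such a classification framework could look like in code, the sketch below defines a usage record that ties each event to a business function and a risk category, then counts events by that pairing. Every name here (UsageEvent, RiskCategory, the field names and the sample values) is an assumption made for illustration, not a prescribed schema or a taxonomy taken from the EU AI Act.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class RiskCategory(Enum):
    # Illustrative labels only; map these to your own risk taxonomy.
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"


@dataclass(frozen=True)
class UsageEvent:
    system_id: str            # which AI system was used
    user_role: str            # who used it (a role, not a personal identifier)
    business_function: str    # operational context of the usage
    risk_category: RiskCategory
    timestamp: datetime       # when the usage occurred (UTC)


def summarise(events):
    """Count events per (business_function, risk_category) pair."""
    return Counter(
        (e.business_function, e.risk_category.value) for e in events
    )


# Hypothetical sample data.
events = [
    UsageEvent("chat-assistant", "analyst", "claims-handling",
               RiskCategory.LIMITED,
               datetime(2026, 4, 1, 9, 0, tzinfo=timezone.utc)),
    UsageEvent("chat-assistant", "analyst", "claims-handling",
               RiskCategory.LIMITED,
               datetime(2026, 4, 1, 14, 30, tzinfo=timezone.utc)),
    UsageEvent("cv-screener", "recruiter", "recruitment",
               RiskCategory.HIGH,
               datetime(2026, 4, 2, 10, 15, tzinfo=timezone.utc)),
]

summary = summarise(events)
for (function, risk), count in sorted(summary.items()):
    print(f"{function:20} {risk:10} {count}")
```

Once usage is captured in a structure like this, answering an external request is a matter of filtering and aggregating records rather than reconstructing them by hand.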

Workforce Impact Metrics

If a regulator asks how AI has changed staffing levels, task allocation or skill requirements in a specific department, the organisation needs a metrics pipeline that connects AI deployment data to workforce data. In practice, this means HR and AI governance must share a common reporting structure. Organisations that treat AI adoption and workforce planning as separate functions will struggle to produce coherent answers.
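A minimal sketch of that common reporting structure follows, assuming both teams can export rows keyed by department, role and month. The field names, the sample figures and the sessions_per_head metric are hypothetical; a real pipeline would use whatever keys and measures HR and AI governance agree on.

```python
from collections import defaultdict

# Hypothetical extract from the AI governance side.
ai_usage = [
    {"department": "finance", "role": "analyst", "month": "2026-03", "ai_sessions": 70},
    {"department": "finance", "role": "analyst", "month": "2026-03", "ai_sessions": 50},
]

# Hypothetical extract from the HR side.
workforce = [
    {"department": "finance", "role": "analyst", "month": "2026-03", "headcount": 10},
    {"department": "hr", "role": "advisor", "month": "2026-03", "headcount": 4},
]


def build_report(ai_usage, workforce):
    """Join AI usage onto workforce rows using a shared (department, role, month) key."""
    sessions = defaultdict(int)
    for row in ai_usage:
        key = (row["department"], row["role"], row["month"])
        sessions[key] += row["ai_sessions"]

    report = []
    for row in workforce:
        key = (row["department"], row["role"], row["month"])
        total = sessions.get(key, 0)  # roles with no recorded usage report zero
        headcount = row["headcount"]
        report.append({
            **row,
            "ai_sessions": total,
            "sessions_per_head": total / headcount if headcount else None,
        })
    return report


report = build_report(ai_usage, workforce)
for line in report:
    print(line)
```

The design choice that matters is the shared key. If HR and AI governance label departments or roles differently, the join silently drops data, which is why the two functions need one agreed structure.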

Incident and Safety Records

Post-market monitoring under the EU AI Act already demands structured incident records. Yet many organisations treat this as a compliance checkbox rather than an operational capability. A regulator requesting safety-evaluation access expects to see what was tested, when, by whom and what the findings were. Email threads and shared folders do not meet that standard.
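One way to picture a record that does meet that standard is sketched below. It captures what was tested, when, by whom and with what findings, adds a sign-off field, and derives a SHA-256 fingerprint so later changes to the record are detectable. The EvaluationRecord class, its fields and the sample values are assumptions for illustration; the EU AI Act does not prescribe this format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class EvaluationRecord:
    system_id: str
    test_name: str       # what was tested
    performed_on: str    # when (ISO 8601 date)
    performed_by: str    # by whom
    findings: str        # what the findings were
    signed_off_by: Optional[str] = None

    def fingerprint(self) -> str:
        """SHA-256 over the canonical JSON form; changes if any field changes."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def is_audit_ready(record: EvaluationRecord) -> bool:
    """A record is complete only when every field, including sign-off, is filled."""
    return all([
        record.system_id,
        record.test_name,
        record.performed_on,
        record.performed_by,
        record.findings,
        record.signed_off_by,
    ])


# Hypothetical sample record.
record = EvaluationRecord(
    system_id="cv-screener",
    test_name="bias evaluation on shortlisting output",
    performed_on="2026-04-02",
    performed_by="model-risk-team",
    findings="No material disparity found in the tested sample.",
    signed_off_by="head-of-ai-governance",
)
unsigned = replace(record, signed_off_by=None)

print(is_audit_ready(record))    # complete record
print(is_audit_ready(unsigned))  # missing sign-off
print(record.fingerprint())
```

Storing the fingerprint alongside each version of a record gives a simple integrity check without committing to any particular document management system.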

Three Steps Governance Teams Can Take Now

First, audit your logging. Map every AI system in use and verify that usage data is captured in a format suitable for external sharing. If your logs exist only inside vendor dashboards you do not control, that is a gap worth closing now.
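The logging audit can start as something as small as the sketch below, which walks an inventory of AI systems and flags those with no logging, with logs held only in a vendor dashboard, or with logs that cannot be exported. The inventory format, the "vendor-dashboard" label and the gap descriptions are hypothetical placeholders for whatever your own system register records.

```python
# Hypothetical inventory of AI systems and where their usage logs live.
inventory = [
    {"system": "chat-assistant", "log_location": "internal-warehouse", "exportable": True},
    {"system": "code-helper", "log_location": "vendor-dashboard", "exportable": False},
    {"system": "doc-summariser", "log_location": None, "exportable": False},
    {"system": "forecast-model", "log_location": "internal-warehouse", "exportable": False},
]


def logging_gaps(inventory):
    """Return a mapping of system name to the logging gap found, if any."""
    gaps = {}
    for item in inventory:
        if item["log_location"] is None:
            gaps[item["system"]] = "no usage logging"
        elif item["log_location"] == "vendor-dashboard":
            gaps[item["system"]] = "logs held only in a vendor dashboard"
        elif not item["exportable"]:
            gaps[item["system"]] = "logs not exportable in a shareable format"
    return gaps


gaps = logging_gaps(inventory)
for system, gap in gaps.items():
    print(f"{system}: {gap}")
```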

Second, connect AI deployment data to workforce reporting. Build or commission a reporting layer that links AI tool usage to role-level workforce metrics. This does not require a full workforce analytics platform. It requires a defined data schema and a regular reporting cadence.

Third, formalise your incident and evaluation records. Move safety evaluations and incident reports into a structured evidence architecture with version control, timestamps and sign-off records.

The Australian MoU is a template, not an outlier. Governance teams that treat AI data-sharing readiness as a future concern will find it becomes a present one faster than expected. Start mapping your internal data flows now; the regulatory request will not wait for your architecture to catch up.
